Update: Generating Alpha with Earnings Date Revisions

Research Update

This study is an update of the research using data from Wall Street Horizon that we first published on our blog in November 2015. At that time, we had data from January 2006 to September 2015. Now we have data to March 2017. We continue to be impressed by the stability of the returns.

We showed our approach for this study in our webinar on June 8th as an example of alpha research on our new DeltixQuantHub platform.

Introduction

Company earnings are the bedrock of financial analysis and investment. Sell-side, buy-side and independent research analysts perform quantitative and qualitative analysis of companies, their peers and their markets in order to provide guidance for short-term earnings and earnings growth for in-house use or for clients. Innovations in earnings analysis over the last few years have included crowd-sourced earnings estimates (e.g. Estimize) and sentiment derived from the news and social media (e.g. Ravenpack, Social Market Analytics). The overriding objective of company analysis has been and still is to forecast as accurately as possible a company’s future earnings and so guide asset allocation and trading decisions.

In this study, we looked at whether earnings announcement date revisions can be used for predicting future prices in a manner that could be profitably traded upon.

We reviewed the research papers of Joshua Livnat (http://www.wallstreethorizon.com/livnat) and Eric So (http://www.wallstreethorizon.com/So), both of whom look at whether changes in the earnings announcement dates can be used to generate returns.

Research Methodology

We conducted our study using our own research software, TimeBase and QuantOffice. In the webinar, we showed how this and other research can be back-tested in DeltixQuantHub, with full control over the parameters used in the study. Below are the steps we followed for this research:

- We populated TimeBase with WSH daily snapshots of company future earnings announcement dates for S&P500 stocks for the period January 3, 2006 to March 31, 2017.

- Corresponding with this time period, we also populated TimeBase with market data for those stocks. In production deployment of Deltix software, a time series of tick data is automatically recorded from real-time streaming market data. Whilst we used one-minute bar data for our research in this study, recording streaming tick data allows Deltix users to use any periodicity of data for their research.

- We now had a base data set covering 11 years on which to apply and test our ideas. Quant researchers use Deltix to express their model ideas as “strategies” in QuantOffice. Now with DeltixQuantHub, researchers will be able to publish their research to others and allow real interaction with the strategy in back-test.

- In the studies by Joshua Livnat and Eric So referenced above, both found that companies that advance their earnings dates generally outperform companies that delay their earnings dates. We started with this premise and then developed the theme with advanced statistical techniques implemented in QuantOffice.

- The resulting model was back-tested, modified and back-tested iteratively. Again, in QuantHub, users will be able to run their own back-tests and test different parameter values.

- Where the earlier studies from Livnat and So modeled a holding period spanning from shortly after the change in date to the actual announcement, we took positions the day before an earnings announcement and sold them the day after, resulting in a holding period of less than 24 hours.

- In order to isolate the calendar date effect from any general market effect (although our holding period was less than a day), we also implemented a dollar-neutral version of the strategy. This was a simple extension to the trading strategy, and it can be switched on or off with a single parameter.
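To make the dollar-neutral idea concrete, here is a minimal Python sketch. It is not the QuantOffice implementation. The function name, the equal weighting, and the choice to hold advancers long and delayers short are assumptions made purely for illustration; the study does not state how its hedged version was constructed.

```python
from typing import Dict, List


def dollar_neutral_weights(advancers: List[str], delayers: List[str],
                           gross: float = 1.0) -> Dict[str, float]:
    """Return portfolio weights whose long and short notionals offset.

    Assumption (not from the study): advancers are held long, delayers short,
    equal-weighted within each side, with each side receiving half of `gross`.
    """
    if not advancers or not delayers:
        # A one-sided book cannot be made dollar neutral in this simple scheme.
        return {}
    weights = {s: gross / 2 / len(advancers) for s in advancers}
    weights.update({s: -gross / 2 / len(delayers) for s in delayers})
    return weights


# Hypothetical tickers, for illustration only.
example = dollar_neutral_weights(["AAA", "BBB"], ["CCC"])
```

Because the long and short sides carry equal notional, a broad market move on the announcement day should largely cancel out, leaving the return attributable to the date-revision signal.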

Results

Our results supported the findings of the previous researchers. Specifically, we found:

- The most likely positive returns occurred when the earnings announcement date was advanced (i.e. brought forward) in the second half of the quarter.

- Conversely, the most probable negative returns occurred when the earnings announcement date was delayed in the first half of the quarter.
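The two findings above amount to bucketing each revision by direction and by when in the quarter it occurred. A rough Python sketch of that bucketing follows. It assumes calendar quarters and that "half of the quarter" refers to the day the revision was made; neither detail is specified in the study, and the function names are hypothetical.

```python
from datetime import date
from typing import Tuple


def quarter_bounds(d: date) -> Tuple[date, date]:
    """Start of the calendar quarter containing d, and start of the next one."""
    first_month = 3 * ((d.month - 1) // 3) + 1
    start = date(d.year, first_month, 1)
    if first_month + 3 > 12:
        next_start = date(d.year + 1, 1, 1)
    else:
        next_start = date(d.year, first_month + 3, 1)
    return start, next_start


def in_second_half(d: date) -> bool:
    """True if d falls on or after the midpoint of its calendar quarter."""
    start, next_start = quarter_bounds(d)
    midpoint = start + (next_start - start) / 2
    return d >= midpoint


def bucket(revision_day: date, old_date: date, new_date: date) -> str:
    """Label a revision, e.g. 'advanced/second_half' or 'delayed/first_half'."""
    if new_date < old_date:
        direction = "advanced"
    elif new_date > old_date:
        direction = "delayed"
    else:
        direction = "unchanged"
    half = "second_half" if in_second_half(revision_day) else "first_half"
    return f"{direction}/{half}"
```

Under these assumptions, the bucket most associated with positive returns in the study corresponds to `advanced/second_half`, and the one most associated with negative returns to `delayed/first_half`.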

For the period January 2006 to March 2017, the back-tested strategies showed Sharpe Ratios of 1.96 (unhedged) and 1.99 (hedged), with average profit per share of 10 cents and 8 cents respectively. By comparison, in the prior study covering the period from January 2006 to September 2015, the back-tested strategies showed Sharpe Ratios of 2.08 (unhedged) and 2.12 (hedged). Average profit per share remained unchanged.
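For readers less familiar with the metric, the sketch below shows one common way to compute an annualized Sharpe Ratio from a series of per-period returns. The study does not say which convention it used, so the 252-period annualization, the zero default risk-free rate, and the function name are all assumptions.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def annualized_sharpe(returns: Sequence[float],
                      periods_per_year: int = 252,
                      risk_free_per_period: float = 0.0) -> float:
    """Mean excess return divided by its sample standard deviation, annualized.

    Assumes `returns` are simple per-period (e.g. daily) strategy returns.
    """
    excess = [r - risk_free_per_period for r in returns]
    sd = stdev(excess)  # sample standard deviation; needs at least two points
    if sd == 0:
        raise ValueError("Sharpe Ratio is undefined when returns do not vary")
    return mean(excess) / sd * math.sqrt(periods_per_year)
```

Note that the ratio is unchanged if every return is scaled by the same positive factor, which is why it is useful for comparing strategies run at different sizes.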

As such, we continue to conclude that there are profitable opportunities from trading with signals derived from WSH earnings date announcement data.

The full results are included in our research paper.

You can also review the prior study.

DeltixQuantHub

In our June 8th webinar, we demonstrated how our new DeltixQuantHub platform allows users to analyze research strategies, including changing parameter values and running an array of back-tests. In this way, non-technical users can interact with the trading strategy directly.

We are very excited by DeltixQuantHub as a means of connecting strategy designers, researchers and potential traders/portfolio managers who may or may not be in the same organization. You can register to view the webinar recording here.

Deltix’s 2017 Mission and Roadmap

Our mission this year is driven by two observations:

Our Alpha Generation Software Has Reached Maturity

Observation 1: Our core product suite for the buy-side (QuantOffice and QuantServer) has reached the state of functional maturity.

The core functionality of QuantOffice and QuantServer has been in use for over 10 years now and has been battle-tested by more than 200 clients. During the last few years, the number of requests for new features and enhancements from our clients has been gradually declining. Based on our communications with clients and prospects, we are happy and proud to acknowledge that the latest release of QuantOffice and QuantServer provides a mature balance of power and flexibility, such that practically any functional requirement of intelligent trading can be addressed with the current capabilities.

Mission Accomplished

Of course, we are continuing to maintain and improve the product. In addition, there are always new trading venues and data providers that we continue to add. But we’re proud to announce that we’ve accomplished our mission to offer a solution for the ongoing process of:

- Developing and testing new alpha generation strategies

- Production deployment of these strategies

- Constantly refining and optimizing existing trading strategies.

Increasing Demand for Optimizing Order Executions

Observation 2: We are witnessing an increasing demand, from both the buy-side and sell-side clients, for tools to design, back-test, optimize and deploy in production advanced order execution algorithms. We also see demand for advanced execution analytics and reporting.

Improving Execution in Forex and Futures

Execution algos have long been deployed in equities trading. Take-up of algo execution of equities, particularly smart order routing, really picked up after the Reg NMS (US) and MiFID (Europe) regulations of some ten years ago. These regs created market fragmentation and obligations for best execution (soon to be strengthened in Europe by MiFID II). Credit Suisse led the way in introducing the buy-side to various types of algos.

In the absence of these regulatory drivers, algo execution in the futures and forex markets lagged. Starting maybe two years ago, driven by the explosion in execution venues (forex) and generally poor trading returns (futures), algo execution has increasingly been adopted in forex and futures trading.

Today, whilst there are important differences between asset classes, buy-side traders (and hence the sell-side service providers) across equities, futures and forex are looking for assistance in improving execution quality, for executions completed both with algos and without.

Continuous Improvement in Execution Quality

Improvement is typically based on measuring execution quality (traditionally called Transaction Cost Analysis – TCA). However, more important than ex post measurement is how to (continuously) improve execution. In essence, the task at hand is to choose the best method of execution for the particular purpose (and in the case of equities and forex, the best location). This may or may not require an execution algo but it does require a continuous deterministic approach to execution. The determination of the best execution method is, based on our discussions with our buy-side and sell-side clients, of fundamental importance because of the financial rewards of getting it right.

We recently discussed forex order execution in an article in e-Forex magazine. This article goes into detail about how data recording and advanced analytics can be used to collect and constantly evaluate time series data with all the layers of historical orders, executions and order books from multiple liquidity providers. This approach provides the necessary granularity and a level of precision in execution decisions not available in traditional trading systems. Click here to download the article.

Building Advanced Tools to Improve Order Execution

As a result of these observations, our focus in 2017 is to provide buy-side and sell-side clients with the most advanced tools to improve order execution. We view this as fundamentally an opportunity to deploy computer science with a clear, measurable objective of decreasing execution costs. To address the demands of large-scale order execution optimization, we are rolling out new technology for massive, cloud-based, distributed back-testing which will enable practically unlimited throughput, measured in billions of messages per second. To address the requirements for ultra-low latency and high availability, we are developing the next generation of our Execution Server technology.

Advanced Analytics Demands Highly Skilled Computer Scientists

Successful systematic alpha generation and order execution is not easy. It requires precision. Implementing precision in the context of massive, fast-moving data sets requires highly advanced computer science, which in turn requires highly skilled computer scientists. Deltix has such people, and we hired an additional 15 in 2016, bringing our headcount to 65. Over 50% of our headcount is in R&D.

A Recent Market Development Enabling Advanced Analytics

The CME’s recent launch of “Market by Order” (MBO) data, so-called Level 3 data, is a very exciting development which will allow for the ultimate in precision of order execution. Leveraging such data will require software of the highest order and we are looking forward to deploying our solution to utilize MBO data with early adopters.

We will continue to demonstrate improvements in execution, as well as alpha generation ideas, in our own research which we publish on this blog.

To an exciting and successful 2017.

Optimizing Order Execution Using Advanced Execution Analysis

Examining Execution Transaction Costs in LME Metals

Transaction Cost Analysis (TCA) has traditionally been used to examine costs of order executions between different brokers, demonstrate best execution and provide other compliance-based functions. More recent applications of TCA involve forensic analysis of executions. This deeper analysis helps firms improve execution quality in the context of a specific trading strategy. To reflect the deeper analysis and internal focus, we call such studies Advanced Execution Analysis (AXA).

Research Methodology

Over the summer, we embarked on a study with our client, the London-based broker, Marex Spectron. The purpose of the study was to examine different methods of executing orders of six metals on the London Metal Exchange (LME) using an Advanced Execution Analysis approach.

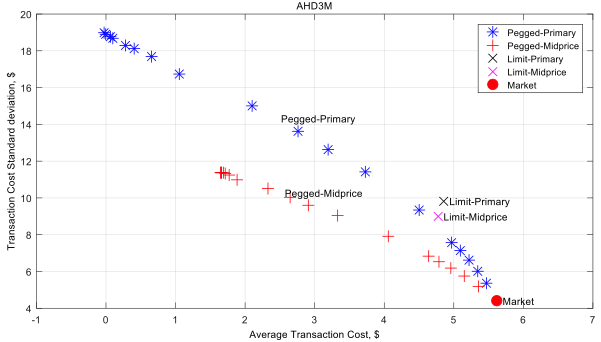

Using Arrival Price (mid-price between bid & offer) as the benchmark, the study looked at whether increasing passivity can reduce transaction costs versus a market order. In addition to market orders, we tested variations in passivity via four different types of limit orders:

- Limit-Primary: Places a limit order at the primary price level (bid for buy orders, offer for sell orders). If this order is not filled after 10 seconds, replaces the limit order with a market order. We use FIFO methodology for the order queue.

- Limit-MidPrice: Places a limit order at the mid-price of the bid and offer, rounding down for buys and up for sells. We assume that our order is the first at that price level. Again, if the order is not filled after 10 seconds, we replace the limit order with a market order.

- Pegged-Primary: Places a limit order at the primary price level and replaces on bid/offer updates. If the order is not filled over various time scales (from 1 second to 1 hour), it is replaced by a market order.

- Pegged-MidPrice: Places a limit order at the mid-price and replaces on bid/offer updates. Again, if the order is not filled over various time scales (from 1 second to 1 hour), it is replaced by a market order.
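The pricing rules in the list above can be sketched in a few lines of Python. This is an illustration only, not the QuantOffice back-test code: the function names, the use of `Decimal`, and the explicit tick-size argument are assumptions, and queue position and order book simulation are deliberately left out.

```python
from decimal import Decimal, ROUND_CEILING, ROUND_FLOOR
from typing import Optional, Tuple


def primary_limit_price(bid: Decimal, ask: Decimal, side: str) -> Decimal:
    """Primary price level: the bid for buy orders, the offer for sell orders."""
    return bid if side == "buy" else ask


def mid_limit_price(bid: Decimal, ask: Decimal, tick: Decimal, side: str) -> Decimal:
    """Mid-price snapped to the tick grid: rounded down for buys, up for sells."""
    mid = (bid + ask) / 2
    rounding = ROUND_FLOOR if side == "buy" else ROUND_CEILING
    return (mid / tick).to_integral_value(rounding=rounding) * tick


def resolve_fill(limit_price: Decimal,
                 seconds_to_fill: Optional[float],
                 market_price_at_timeout: Decimal,
                 timeout_s: float = 10.0) -> Tuple[Decimal, str]:
    """Use the limit fill if it arrived within the timeout, else fall back to market.

    `seconds_to_fill` is None when the simulated limit order never filled.
    """
    if seconds_to_fill is not None and seconds_to_fill <= timeout_s:
        return limit_price, "limit"
    return market_price_at_timeout, "market"
```

For the pegged variants, the same price functions would be re-evaluated on every bid/offer update, with `timeout_s` set to the chosen peg interval (anywhere from 1 second to 1 hour in the study).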

Marex Spectron provided LME tick data for the six metals for the period March 1 to May 13 2016. This data together with corresponding market depth (order book) data and volume profile data was loaded into Deltix TimeBase. The different execution methods described above were implemented in Deltix QuantOffice and back-tested against the market depth data. The following statistics were computed:

- Transaction Cost (i.e. the difference between the order execution price and Arrival Price)

- Standard Deviation of Transaction Costs

- Average Time to Fill

- Limit Order Fill %
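As a rough illustration of how these four statistics could be derived from back-test output, consider the Python sketch below. The `Execution` record layout is hypothetical, and the sign convention (a positive cost means a worse price than arrival, for both buys and sells) is an assumption, since the study only defines cost as the difference between execution price and Arrival Price.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import Dict, List


@dataclass
class Execution:
    side: str               # "buy" or "sell"
    arrival_mid: float      # mid-price between bid and offer at order arrival
    exec_price: float       # price at which the order was finally executed
    seconds_to_fill: float  # time from arrival to fill
    filled_as_limit: bool   # False if the order fell back to a market order


def signed_cost(e: Execution) -> float:
    """Cost versus arrival price, positive when the execution was worse."""
    sign = 1.0 if e.side == "buy" else -1.0
    return sign * (e.exec_price - e.arrival_mid)


def summarize(execs: List[Execution]) -> Dict[str, float]:
    """Compute the four statistics listed above for a non-empty set of executions."""
    costs = [signed_cost(e) for e in execs]
    return {
        "mean_cost": mean(costs),
        "cost_stdev": pstdev(costs),  # population standard deviation
        "avg_time_to_fill": mean(e.seconds_to_fill for e in execs),
        "limit_fill_pct": 100.0 * sum(e.filled_as_limit for e in execs) / len(execs),
    }
```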

Results

Defining risk as the standard deviation of transaction costs, we found that:

- Across all evaluated strategies, the Market execution method has the highest expected transaction cost and the lowest risk associated with it.

- When using peg intervals of short duration (up to 30-60 seconds depending on the market), the Pegged-MidPrice execution method provides both lower expected transaction costs and risk compared to the Pegged-Primary execution method.

- Only Pegged-Primary (and not Pegged-MidPrice) demonstrates steady improvement of the transaction cost when using peg intervals of longer duration (above 60 seconds). However, this improvement comes at the cost of additional risk.

An example of results displayed graphically is shown below:

(Source: Marex, Deltix, LME)

The full study is available here.

Practical Considerations

As with any research, the results of one study are useful in providing general direction. However, the results would be significantly more useful if the research were repeated on an ongoing basis. This allows researchers to use market and trade data from different time periods, which will hopefully validate (or possibly repudiate) their results. At a minimum, ongoing research would help to refine their strategies.

For example, we are continuing this research by introducing price prediction heuristics into the pegged order execution methods. The goal is to reduce the risk of the Pegged-Primary method so we can capture the benefit of reduced transaction cost with less downside risk (i.e. standard deviation of transaction cost).

However, any price prediction technique is subject to the danger of curve-fitting. The on-going research approach described above extends the number and scope of out-of-sample testing periods to provide more reliable results.

Ideally, executions are analyzed in real-time. This way, researchers can analyze deviation of transaction costs versus benchmarks in the context of a rolling historical window (say from three months ago to real-time). With such information, traders can immediately identify divergences and execution anomalies compared to the performance of recent executions. This allows them to make necessary adjustments to ongoing executions or pending orders.
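One simple way to picture this kind of monitoring is a rolling z-score on transaction costs, sketched below in Python. It is a toy illustration under stated assumptions: the class name, the count-based window (rather than a three-month time window), and the z-score threshold are all hypothetical choices, not a description of any Deltix product.

```python
from collections import deque
from statistics import mean, stdev


class RollingCostMonitor:
    """Flag executions whose cost deviates sharply from recent history."""

    def __init__(self, window: int = 500, z_threshold: float = 3.0,
                 min_history: int = 30) -> None:
        self.costs = deque(maxlen=window)  # oldest costs drop out automatically
        self.z_threshold = z_threshold
        self.min_history = max(2, min_history)  # stdev needs at least two points

    def update(self, cost: float) -> bool:
        """Record a new transaction cost; return True if it looks anomalous."""
        flagged = False
        if len(self.costs) >= self.min_history:
            mu = mean(self.costs)
            sd = stdev(self.costs)
            if sd > 0 and abs(cost - mu) / sd > self.z_threshold:
                flagged = True
        self.costs.append(cost)
        return flagged
```

A trader-facing tool would call `update` as each fill arrives and surface flagged executions immediately, so that pending orders can be adjusted while they are still working.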

As we have discussed before, the holy grail is having fully adaptive execution algos which change their behaviour in real-time in response to real-time market data and actual performance. But the first step is to move from traditional TCA to ongoing detailed research and analysis of executions, so we can incorporate those findings into current trading decisions.

You can download this research study here.

More Insight on How To Do Execution Analysis

This research study is focused on advanced execution analysis rather than alpha generation. We’ll be publishing results of more execution research in 2017, so stay tuned.

In addition, over the summer, we published a couple of articles about approaches for doing advanced execution analysis. Here is a brief blog post discussing the advantages of recording your own market data for execution analysis. Here’s an article from Stuart Farr on DIY Execution Analysis published by CTA Intelligence.

If you’d like to learn more about the platform used to conduct this research, visit our website or contact us.

Generating Alpha Using IPO and Secondary Issue Data

The markets are currently in a risk-averse state, at least in part as a consequence of rapidly evolving geopolitical issues and slowing market growth in China. As a result, the IPO and secondaries markets slowed in late 2015 and have remained sluggish in the first quarter of 2016. Nonetheless, companies will still need access to the capital markets this year, even if it is not at a frenzied pace.

Trading IPOs and Secondaries

Developing a strategy for trading IPOs and secondaries is not trivial. Issuers push the news out to promote their offerings, sometimes resulting in excessive and unfiltered news and hype. This makes it challenging to generate accurate signals for a trading strategy. It can be helpful to have a tool that can track news and provide indications as to whether, and by how much, a stock price is likely to move from its offering price in the first few days of trading.

Triad Securities’ “New Issue Service” provides a select view of IPOs and Secondaries and how they are affected by variables in the global financial markets. It includes proprietary consensus reports indicating the anticipated pricing of IPOs or Secondaries. These reports provide indications on deals, projected first day prices or price ranges on IPOs and a consensus indicator on secondaries.

Triad’s New Issue Service highlights subtle changes in the new issue and secondary markets from the moment of filing through pricing.

Can We Generate Alpha with IPO Consensus Data?

Our Quantitative Research Team sought to determine if there are opportunities to generate alpha in US equities, using Triad data as a basis for market movement prediction after IPO events or after secondaries pricing.

We loaded Triad data and associated market data into Deltix TimeBase and then developed, tested and refined candidate trading strategies in Deltix QuantOffice. The strategies were back-tested on in-sample data for the years 2008-2014, while 2015 data was included for out-of-sample testing.
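The in-sample/out-of-sample split described above is straightforward to express in code. The Python sketch below assumes a hypothetical trade record of `(trade date, profit per share)`; the actual data layout used in TimeBase is not described in this post.

```python
from datetime import date
from typing import Iterable, List, Sequence, Tuple

# Hypothetical record layout: (trade date, profit per share).
Trade = Tuple[date, float]


def split_by_year(trades: Sequence[Trade],
                  in_sample_years: Iterable[int] = range(2008, 2015),
                  out_of_sample_years: Iterable[int] = (2015,)
                  ) -> Tuple[List[Trade], List[Trade]]:
    """Partition trades into in-sample (2008-2014) and out-of-sample (2015) sets."""
    in_years = set(in_sample_years)
    out_years = set(out_of_sample_years)
    in_sample = [t for t in trades if t[0].year in in_years]
    out_of_sample = [t for t in trades if t[0].year in out_years]
    return in_sample, out_of_sample
```

Keeping the 2015 trades out of strategy development means any parameter choices are tuned only on 2008-2014, so the 2015 results give a cleaner read on whether the signal generalizes.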

The Trading Strategies

For the first strategy, based on Triad’s consensus data for IPOs, we entered long or short positions one week after the IPO. For the second strategy, based on Triad’s consensus data for secondaries, we entered long positions only, but hedged the positions with the SPY ETF. Back-testing showed that the two strategies (the first for IPOs, the second for Secondaries) had Sharpe Ratios of 2.11 and 2.73 with average profits per share of $0.20 and $0.09 respectively for the eight years of 2008 to 2015.

Implications of the Research

The results from this research demonstrated that there are opportunities to generate alpha using Triad’s Consensus Data for IPOs and Secondaries. Based on these results, we think firms would find it worthwhile to invest in further research. For example, firms might want to experiment with different time periods after the IPO, various holding periods, different hedging strategies, and/or alternative execution approaches.

QuantOffice makes it easy to implement and back-test these variations. Utilizing QuantServer in addition allows users to deploy the strategies for live simulation and production trading.

You can register and download the research study here.

You might also be interested in the research we published last quarter where we tested whether it was possible to generate alpha using earnings date revisions data from Wall Street Horizon.